Posts from March 2010

Computers’ sleight-of-hand at the LHC

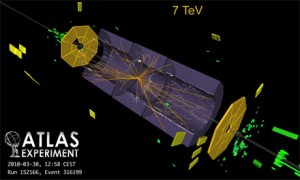

collisions recorded by ATLAS detector

YET another record tumbled at the Large Hadron Collider this week. At 12.06 BST on 30 March, 2010, the LHC smashed together protons to produce the highest energies ever recorded in a particle collider. This blog post discusses the role of computers in keeping track of particles that spew out of such collisions.

The two beams of the LHC each achieved energies of 3.5 teraelectronvolts (TeV), making the total collision reach a staggering 7 TeV.

According to the Guardian blog, Fabiola Gianotti, head of the ATLAS team, said, “We got something like 40 events per second, which is the expected rate. It’s the beginning of a new era of physics exploration.”

If physicists are excited about 40 events per second, imagine how much more thrilled they will be when the LHC reaches not only its full energy of 14 TeV, but also full intensity (which means that the beam will be chock-full of protons – which it is not at the moment). The collisions will then produce a phenomenal 100 billion particles per second. And it’ll be up to some very sophisticated computers to keep track of this barrage.

Here are some paragraphs from The Edge of Physics on just how important computers are for keeping track of the collisions that are creating new particles. The paragraphs refer to the ATLAS detector, which surrounds one of the four points around the 27-kilometre-long ring where the collisions occur.

- The beams are not a continuous stream of particles; rather, each beam is made up of bunches of protons. Going around the tunnel will be 2,808 such bunches in each direction. When they cross paths, at four specific points, only about twenty of the 200 billion protons that make up the two bunches will collide; the rest will continue their journey around the accelerator unimpeded. Not much to keep track of, one might think—except when you consider that the beams are moving at near the speed of light, and thus 40 million bunches cross every second. Their collisions produce 100 billion particles per second.

- The ATLAS computers sift through this extraordinary barrage of data, looking for interesting collisions that could contain signatures of the Higgs, a neutralino, or extra dimensions. The whole thing is like a conveyor belt moving at nearly the speed of light. The computers are barely 500 feet from where the protons collide. Even as the computers are analyzing the events from one collision, data from other collisions are on their way. In the meantime, muons are streaming from the inner detector, and the muon chambers are working feverishly to track some and ignore others. The system cannot detect all the muons—there are just too many of them—so it depends on feedback from the computers to decide which ones are more important. The phrase “clockwork precision” takes on a different meaning here: Seconds, milliseconds, microseconds are too long. We are in the realm of nanoseconds. Spacetime has been sliced into the thinnest of slivers.
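The arithmetic in the excerpt can be checked in a few lines of Python. This is only a rough sketch built on the round numbers quoted above; the variable names are made up for illustration, "about twenty" is read here as roughly twenty collisions per bunch crossing, and the particles-per-collision figure is simply what those numbers imply, not a measured value.

```python
# Back-of-the-envelope check using the round numbers quoted in the excerpt.
crossings_per_second = 40e6      # bunch crossings per second
collisions_per_crossing = 20     # assumption: "about twenty" read as collisions per crossing
particles_per_second = 100e9     # particles produced per second

collisions_per_second = crossings_per_second * collisions_per_crossing
time_between_crossings_ns = 1e9 / crossings_per_second
implied_particles_per_collision = particles_per_second / collisions_per_second

print(f"Collisions per second:           {collisions_per_second:.1e}")
print(f"Time between bunch crossings:    {time_between_crossings_ns:.0f} ns")
print(f"Implied particles per collision: {implied_particles_per_collision:.0f}")
```

On these numbers the bunches cross every 25 nanoseconds, which is why the excerpt says that seconds, milliseconds and microseconds are too long.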

March 30, 2010 1 Comment

Down in the Dumps: How the LHC deals with runaway beams

It’s been heady days for the Large Hadron Collider. Its two proton beams have reached record energies of 3.5 teraelectronvolts (TeV). When the beams reach their full potential, each will have energies of 7 TeV. Just how much energy is that?

Well, as the popular account goes, each beam will carry as much energy as a 400-ton train travelling at 150 kilometres per hour. The two beams, one going clockwise and another counter-clockwise, have to be precisely controlled in their orbits around the 27-kilometre-long ring of the LHC.

Now, imagine that the beams go haywire. The LHC loses control of the beams. What happens? Well, it can be chaos: the equivalent of these super-fast trains smashing into the nearby magnets, electronics, and sensitive detectors. A runaway beam is an accelerator physicist’s nightmare. The damage such errant beams can cause is incalculable.

Here’s a paragraph from The Edge of Physics describing the damage control mechanism:

- Such an errant beam, which is capable of melting a 500-kilogram block of copper, has to be stopped instantly, or it can wreak havoc on the delicate instruments, literally liquefying anything directly in its path. It can take weeks if not months or years to repair the damage. In a 2004 test, physicists redirected a 450-GeV beam into an auxiliary tunnel and dumped it into a target. Microphones picked up the gunshot-like bang.

How exactly do they “dump” the beam? As we saw in the last blog post, a full-intensity LHC beam will have about 3,000 bunches of protons going round and round in each direction, like carriages in a train. At one point in this train of proton bunches is a gap, where there are no protons. This gap is used during beam dumps.

In each direction, there is a 600-metre-long straight tunnel that runs at a tangent to the LHC’s 27-km-long tunnel. At the end of the tunnel is a giant block of composite graphite surrounded by steel and concrete. This is designed to absorb the beam’s energy.

If a beam needs to be dumped, then a special deflecting magnet switches on at the moment the gap in the proton stream passes by. Now, the next bunch of protons that encounters this magnetic field is deflected into the tangential tunnel, and the beam ends up smashing into the graphite. All along the dump tunnel are other magnets that cause the beam to spread vertically and horizontally, so that when it hits the dump, its energy is spread over a larger area than if it were a focused beam.

If the two LHC beams, each at 7 TeV, have to be dumped, they will smash into their target with the sound of 150 kilograms of TNT.
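The train and TNT comparisons in this post can be roughly reproduced from the numbers quoted in these posts. The sketch below is not an official CERN calculation. It assumes about 100 billion protons per bunch (half of the "200 billion" quoted for two colliding bunches), metric tons for the train, and the conventional figure of 4.184 megajoules per kilogram of TNT; all variable names are illustrative.

```python
# Rough check of the beam-energy comparisons, using round numbers from these posts.
EV_TO_JOULE = 1.602176634e-19   # joules per electronvolt (exact SI value)
TNT_JOULES_PER_KG = 4.184e6     # conventional TNT energy equivalent (assumption)

bunches_per_beam = 2808         # full-intensity figure quoted in the posts
protons_per_bunch = 100e9       # assumption: half of the "200 billion" in two bunches
proton_energy_ev = 7e12         # 7 TeV per proton at full energy

# Total energy stored in one beam: every proton carries 7 TeV.
beam_energy_j = bunches_per_beam * protons_per_bunch * proton_energy_ev * EV_TO_JOULE

# Kinetic energy of the train, (1/2) m v^2, assuming metric tons.
train_mass_kg = 400 * 1000
train_speed_m_per_s = 150 / 3.6          # 150 km/h converted to m/s
train_energy_j = 0.5 * train_mass_kg * train_speed_m_per_s ** 2

# Energy of both beams expressed as a mass of TNT.
tnt_kg_both_beams = 2 * beam_energy_j / TNT_JOULES_PER_KG

print(f"Energy stored in one beam: {beam_energy_j / 1e6:.0f} MJ")
print(f"Energy of the train:       {train_energy_j / 1e6:.0f} MJ")
print(f"TNT equivalent, two beams: {tnt_kg_both_beams:.0f} kg")
```

Under these assumptions one beam stores a little over 300 megajoules, the train carries about 350 megajoules, and the two beams together come out close to 150 kilograms of TNT, so the popular comparisons hold up to within the rounding of the inputs.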

March 20, 2010 No Comments

Of Beams and Bunches: The LHC record in perspective

The Large Hadron Collider reached a new milestone at 5.20 AM Central European Time, when it circulated two beams of protons – one clockwise and the other counter-clockwise – around the 27-kilometre-long ring. Each beam had an energy of 3.5 teraelectronvolts (TeV).

This is a new record!

The previous record was set in November, when each beam reached an energy of 1.18 TeV.

Some more numbers to put this in perspective.

The current LHC beams are considered “pilot” beams, meaning they are operating at very low intensities. When the LHC is running at its peak, not only will each beam have an energy of 7 TeV, but each beam will be composed of 2,808 bunches of protons. When these bunches cross paths at four points along the LHC’s ring, they will collide, and these collisions will produce about 100 billion particles per second.

The current 3.5 TeV beam at the LHC has only one bunch of protons per beam. What that means is that most of the beam is empty for now, as the physicists ensure that all their systems are in working order as they reach for higher energies and intensities.

According to Steve Myers, the Director for Accelerators and Technology at the LHC, they hope to reach 720 bunches per beam by the end of 2010. They will do so by slowly upping the number of bunches in each beam: 4, 16, 43, 156… and so on. Such intensities are needed to start taking meaningful data for doing physics.

In my next post, I’ll discuss the technology behind “dumping” the beam. What happens when the beam cannot be controlled? How do you prevent it from smashing into the delicate electronics and frying them?

March 19, 2010 No Comments

Planck paints a dusty masterpiece

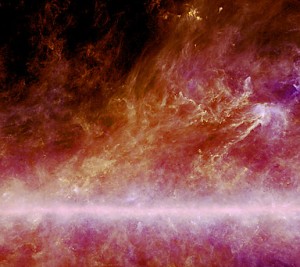

Not an abstract painting, but dust in the Milky Way

This week, the European Space Agency (ESA) released glorious maps of the dust in the Milky Way. These maps were created by the Planck satellite, which was launched last year. Its main mission is to map the cosmic microwave background, the radiation that was released when the universe was about 370,000 years old.

So, what’s dust got to do with the CMB?

Well, the thermal emissions of dust can obscure some of the CMB radiation. So, in order to precisely map the CMB, you need to characterize the dust in the Milky Way. The dust is extremely cold – just about 12 degrees above absolute zero in some places, and tens of degrees above absolute zero at its warmest (in the maps, the whiter regions are hotter, and the darker pinks are colder).

But the CMB at 2.725 K is even colder. So, this dust can be a nuisance.

NASA’s WMAP satellite – the gold standard for mapping the CMB until Planck came along – did not have the high frequency coverage necessary to map the dust effectively.

Planck can, and it’s doing exactly that, as this image shows.

See some pictures of Planck being assembled before launch here.

March 18, 2010 No Comments

Antimatter over Antarctica: Video

WHEN IT COMES TO EXTREME PHYSICS, it doesn’t get better than the long duration balloon flights from McMurdo, Antarctica. These balloons carry experiments to the upper stratosphere, from where experiments study everything from primordial antimatter to the cosmic microwave background, which is the radiation left over from the big bang.

Just some astonishing facts about the balloons:

Payload weight: About 2 tons

Balloon fabric: About 0.0008 inches thick (or thin!), like a very thin sandwich wrap

Balloon fabric weight: Despite the thin fabric, it weighs almost 2 tons itself

Helium: At full cruising altitude of about 40 kilometres, the helium expands to about 1 million cubic metres. It takes an hour to fill the balloon, even with high-pressure compressed helium tanks.

Rate of ascent: About 500 feet per minute

Balloon size: At cruising altitude, when the helium has expanded fully, the balloon is about 400 feet across. From the ground, it can look about half the size of the full moon.
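A couple of these figures can be turned into quick estimates. The sketch below assumes a constant rate of ascent all the way up, a balloon directly overhead, and an apparent full-moon diameter of about 0.52 degrees; these are simplifications of mine, not numbers from the balloon team.

```python
import math

FEET_TO_METRES = 0.3048

altitude_m = 40_000                           # cruising altitude quoted above
ascent_rate_m_per_min = 500 * FEET_TO_METRES  # "about 500 feet per minute"
balloon_diameter_m = 400 * FEET_TO_METRES     # "about 400 feet across"

# Time to reach cruising altitude, assuming the ascent rate never changes.
ascent_time_hours = altitude_m / ascent_rate_m_per_min / 60

# Apparent angular diameter, assuming the balloon is directly overhead.
balloon_angle_deg = math.degrees(2 * math.atan(balloon_diameter_m / (2 * altitude_m)))
moon_angle_deg = 0.52                         # assumption: typical full-moon diameter
fraction_of_moon = balloon_angle_deg / moon_angle_deg

print(f"Time to reach altitude:     {ascent_time_hours:.1f} hours")
print(f"Apparent size of balloon:   {balloon_angle_deg:.2f} degrees")
print(f"Fraction of moon's diameter: {fraction_of_moon:.2f}")
```

With these simplified inputs the climb takes a bit over four hours, and the balloon's apparent diameter is roughly a third of the moon's, the same ballpark as the figure quoted above.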

Remember, the balloon is flown from the Ross Ice Shelf in Antarctica, so the crew has to work in sub-zero temperatures, to do all the wiring.

Here’s a video of the balloon launch (courtesy of Ryan Miller and Jessica Reynolds, who filmed it for Polar Palooza):

March 13, 2010 No Comments

It’s the magnets, stupid: Why the LHC succeeded where the SSC failed


Soon the Large Hadron Collider (LHC) will attempt to reach collision energies of 7 teraelectronvolts (TeV). So, despite the early setbacks of 2008, the LHC is marching on.

It’s worth thinking about how far physics would have come had the Superconducting Super Collider (SSC) been completed. It’s even more important to think about why the SSC never got built. Ironically, it was because the SSC’s designers were not ambitious enough, at least in certain aspects of accelerator design.

The Superconducting Super Collider (SSC) was designed to achieve energies of about 40 TeV, and the tunnel to house it was to be 87 kilometers in circumference. The site chosen was near Waxahachie, in northeast Texas, about 30 miles south of Dallas. The ambitious plan was approved in 1987 by President Ronald Reagan, who used a popular sports metaphor from American football to rally the physicists (a tribe that really needs no such encouragement). “Throw deep,” he said.

So, where was the lack of ambition? Surely, 40 TeV energies and an 87-kilometre-long tunnel were ambitious enough.

To make sense of why the SSC lacked ambition, we need to look at a key aspect of particle accelerators: the magnets that create the magnetic fields to keep the particles (such as electrons and protons) confined to the beam pipe. The particles that are being accelerated want to go straight, and their trajectories have to be bent precisely by the magnetic fields, so that the particles can go round and round the tunnel. Steering a beam is not unlike driving a Formula One racing car. The faster the car, the harder it is for the driver to tackle tight bends. F1 drivers train hard to build up their arm and (especially) neck muscles to handle the turns. Magnets are the muscles of the collider world. The tighter the curve of the tunnel, the stronger the magnetic muscles need to be.

The SSC played safe when it came to the magnets. Although they chose superconducting magnets, the technology was already well-tested and not innovative. Had they designed magnets with fields that were twice as strong, they could have halved the circumference of the tunnel.
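The trade-off between field strength and tunnel size follows from a standard rule of thumb for a singly charged particle: momentum in GeV/c is roughly 0.3 times the field in tesla times the bending radius in metres. The sketch below applies it. The 20 TeV per beam is half of the 40 TeV quoted above, and the 6.6-tesla field is an illustrative assumption, not a figure from this post.

```python
# Rule of thumb for a singly charged particle: p [GeV/c] = 0.2998 * B [T] * r [m].
GEV_PER_TESLA_METRE = 0.299792458

def bending_radius_m(momentum_gev: float, field_tesla: float) -> float:
    """Radius of the circular path of a proton with the given momentum in the given field."""
    return momentum_gev / (GEV_PER_TESLA_METRE * field_tesla)

beam_momentum_gev = 20_000     # assumption: 20 TeV per beam, half of the 40 TeV quoted
baseline_field_tesla = 6.6     # illustrative assumption for a conservative dipole field

baseline_radius = bending_radius_m(beam_momentum_gev, baseline_field_tesla)
doubled_field_radius = bending_radius_m(beam_momentum_gev, 2 * baseline_field_tesla)

print(f"Bending radius at {baseline_field_tesla} T:  {baseline_radius / 1000:.1f} km")
print(f"Bending radius at {2 * baseline_field_tesla} T: {doubled_field_radius / 1000:.1f} km")
```

Doubling the field halves the bending radius for the same beam energy. A real tunnel is longer than the circle this radius implies, because it also needs straight sections and other magnets, so the circumference shrinks by roughly, rather than exactly, the same factor.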

The other mistake they made was in designing a separate set of magnets for each beam, one going clockwise and the other counter-clockwise. This meant that the tunnel’s bore had to be correspondingly large, at a staggering 4.25 metres. Ultimately, it wasn’t any fancy technology that proved the SSC’s undoing. It was the cost of the civil engineering. By 1993, the cost estimates for building the SSC had ballooned from $4.4 billion to more than $12 billion. The U.S. Congress canned the project, leaving behind a 22.5 kilometer stretch of completed tunnel that now lies derelict.

So, what did the folks at CERN do when it came to the LHC? They decided to reuse the 27-kilometre-long tunnel that housed the Large Electron Positron (LEP) machine. The LEP, at its peak, achieved energies of 200 GeV. The LHC was being designed for 14 TeV collisions. How could such energetic particles be confined to such a tight orbit around the old tunnel? It all comes down to magnets. Of the more than 9,000 superconducting magnets inside the LHC tunnel, 1,232 need special mention. These are dipole magnets, and they are the machine’s neck muscles. Each weighs 35 tons, and the entire lot has to be cooled down to 1.9 K, the temperature of superfluid liquid helium (the SSC, in contrast, used simple liquid helium at 4.5 K). It’s the immense magnetic fields created by these giant magnets at the LHC that keep the protons confined to the beam pipe.

There was another innovation. The LEP tunnel was only 3.8 metres wide. The LHC could not afford to use two sets of cryogenically cooled superconducting magnets: they wouldn’t fit inside the tunnel. So, the magnets for the LHC were designed such that the same cryostat could house two magnets, one for the clockwise beam and the other for the counter-clockwise beam. It was a tight fit, but it worked.

March 10, 2010 No Comments

X-ray telescopes could solve the mystery of dark matter

Over the last two years, the FERMI and PAMELA satellites and the ATIC balloon-borne experiment have all tantalised us with hints of dark matter in our galactic neighbourhood. But how do we know that what they are seeing is not being produced by astrophysical sources such as pulsars? Well, a new paper suggests that advanced X-ray telescopes of the future could solve the mystery.

In August 2008, there was much hullabaloo about PAMELA and ATIC having seen an excess of positrons over the expected background of such particles in space. This excess could be coming from the mutual annihilation of dark matter particles. Even NASA’s FERMI satellite has seen such an excess. But, unfortunately, this does not constitute proof of the existence of dark matter particles in our galaxy. Such an excess can also be caused by nearby pulsars.

Now, Antoine Calvez of UCLA and colleagues are suggesting that we look at the dwarf spheroidal galaxies that hang around the Milky Way. These dwarfs should have abundant dark matter, but a paucity of pulsars. So, if dark matter is annihilating in such galaxies, then the high-energy electrons and positrons produced by the process should up-scatter – or bump up in energy – the photons of the cosmic microwave background into the X-ray energy band. So, if we see such X-rays, then it’ll constitute solid evidence that dark matter particles are creating the electrons and positrons and not pulsars.
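To see why up-scattered CMB photons would land in the X-ray band, here is a rough estimate using the standard textbook formula for this kind of scattering (inverse Compton scattering in the low-energy limit), in which the average photon energy is boosted by a factor of (4/3)γ², where γ is the electron's Lorentz factor. The 1 GeV electron energy is an illustrative assumption of mine, not a value taken from the paper.

```python
BOLTZMANN_EV_PER_K = 8.617333e-5        # Boltzmann constant in eV per kelvin
ELECTRON_REST_ENERGY_EV = 0.51099895e6  # electron rest energy, about 0.511 MeV

cmb_temperature_k = 2.725
# Mean photon energy of black-body radiation is about 2.70 * k * T.
mean_cmb_photon_ev = 2.70 * BOLTZMANN_EV_PER_K * cmb_temperature_k

def upscattered_energy_ev(electron_energy_ev: float, photon_energy_ev: float) -> float:
    """Average photon energy after scattering off a fast electron (low-energy limit)."""
    gamma = electron_energy_ev / ELECTRON_REST_ENERGY_EV
    return (4.0 / 3.0) * gamma ** 2 * photon_energy_ev

electron_energy_ev = 1e9                # assumption: a 1 GeV electron or positron
xray_energy_ev = upscattered_energy_ev(electron_energy_ev, mean_cmb_photon_ev)

print(f"Mean CMB photon energy: {mean_cmb_photon_ev * 1000:.2f} meV")
print(f"Up-scattered energy:    {xray_energy_ev / 1000:.1f} keV")
```

A photon that starts out at well under a thousandth of an electronvolt ends up at a few kiloelectronvolts, which is squarely in the range X-ray telescopes observe.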

The fly in the ointment is that today’s X-ray telescopes are nowhere near as sensitive as would be required for such observations. But, the researchers hope that the next generation of X-ray telescopes could do the trick.

March 5, 2010 No Comments

Large Hadron Collider to run on women power

women at cern. image courtesy cern

On Monday, 8 March, CERN, the particle physics laboratory near Geneva, Switzerland, and the home of the Large Hadron Collider, will hand over control of the facility to women to mark International Women’s Day.

According to CERN’s Pauline Gagnon, all the control rooms for accelerators and experiments, including those of the Large Hadron Collider (LHC) and the ATLAS and CMS detectors, will be staffed primarily by women.

Gagnon came up with the idea to highlight the fact that women have claimed their share of the space in physics, contrary to conventional wisdom.

On the day, live video will be available at http://cern.ch/womensday.

Last year, Fabiola Gianotti took over as spokesperson for the ATLAS experiment, which is looking for the Higgs boson among other things. Gianotti is one of the physicists featured in The Edge of Physics. Some excerpts from Chapter 9: The Heart of the Matter:

- Physics wasn’t Gianotti’s first love. “I came to physics from very far away,” she told me. “When I was a young girl, I loved art and music. I had been studying piano quite seriously at a conservatory and had taken courses in high school targeted towards literature, languages like ancient Greek and Latin, philosophy, and history of art. I loved these subjects, but I was also a very curious little girl. I was fascinated by the big questions. Why are things the way they are? This possibility of answering fundamental questions has always attracted me—my mind, my spirit, everything.”

- She stumbled upon physics soon afterward. “I discovered that physics is really interested in the most fundamental questions,” she said.

- More than philosophy?

- “Even more,” she said, speaking slowly to emphasize each syllable. “Because experimental physics is based on facts. It is answering fundamental questions—not just giving an answer to your question by inventing something, but proving it. This is very, very nice.”

- This was no theorist talking. Here was someone who got down-and-dirty with instruments. These concepts—supersymmetry, dark matter, the Higgs, extra dimensions—were not mere equations to her but ideas that left traces in her instruments, whether in the form of streaking jets of particles or in some anomalous measurement of momentum or energy.

- The LHC and ATLAS could uncover some deep truths about the universe. Gianotti confessed to “feelings of excitement and the awareness of being close to something very important and great for humankind.” She quoted the thirteenth-century Italian poet Dante Alighieri: Fatto non foste a viver come bruti ma per seguir virtute et conoscenza (“We were created not to live as animals but to pursue virtue and knowledge.”) “As human beings, the pursuit of fundamental research and knowledge is a need for us, which separates us from animals or vegetables. It is like the need for art,” said Gianotti.

March 4, 2010 No Comments

From nearly winning the Nobel to farming in Italy

IT WAS TWENTY YEARS AGO TODAY…

Apologies to the Beatles, but it was twenty years ago, in January 1990, that Herb Gush, a physicist at the University of British Columbia, performed a landmark measurement of the cosmic microwave background using a rocket-based experiment. Had fate sided with him and had he launched the rocket a few months earlier, Gush would have won the Nobel Prize for accurately measuring the spectrum of the CMB. Instead, he became the first to independently confirm the measurements made by NASA’s Cosmic Background Explorer (COBE) satellite, for which John Mather and George Smoot won the Nobel in 2006.

The same month that Gush launched his rocket from White Sands, New Mexico, John Mather received a standing ovation at the meeting of the American Astronomical Society in Crystal City, Virginia. His experiment on COBE had shown that the radiation left over from the big bang had exactly the spectrum expected of black-body radiation. It was a stunning confirmation of the big bang theory.

Gush almost beat Mather to the first indisputable measurement of the CMB spectrum (after the initial discovery by Penzias and Wilson in 1965). Gush had been using rockets to launch spectrometers hundreds of kilometers into space since the 1970s. But his earlier attempts with prototype spectrometers were unsuccessful as the payload failed to stay clear of the rocket’s exhaust, messing up the measurements.

Then, in the late 1980s, Gush and graduate students Ed Wishnow and Mark Halpern were ready with a sophisticated instrument that compared the CMB spectrum with the spectrum of an on-board blackbody radiator. But in the fall of 1989, the device was damaged when a vibrator malfunctioned during a pre-launch vibration test.

The time it took for repairs meant that the rocket launch was delayed until late January 1990. When it was finally sent up, the experiment was a success. “It was immediately clear that the spectrum was near Planckian with a temperature near 2.7K,” said Gush in an email to me in 2007.

But as luck would have it, COBE had already made the measurement. If the roles had been reversed, COBE would have confirmed Gush’s data and not the other way around. Of course, this doesn’t take anything away from COBE, which was an exquisite experiment.

Just goes to show how small the margin can be between being the first to a discovery and the second.

Gush, for his part, retired and took up farming near Palermo, Italy.

When I met cosmologist James Peebles of Princeton in 2007 for my book The Edge of Physics, Peebles was still a bit miffed that Gush didn’t share the Nobel with Mather and Smoot. “There should be a list of great measurements that were underappreciated,” he told me. “Gush was working on that experiment for more than 15 years. COBE was under development for the same length of time, and they got first data within 2 months of each other. Mather in his book is very explicit – Gush could have scooped us, and would have been famous. Instead young people don’t even know his [Gush’s] name.”

Well, here’s to Gush and his brilliant experiment.

March 2, 2010 No Comments

Tales of Russian ingenuity

Ice Fishing for neutrinos on a frozen Lake Baikal

WE HAVE ALL HEARD OF HOW NASA spent millions (or is it billions?) on developing a pen that works in zero gravity, while the Russians used a pencil. A classic case of Russian ingenuity, it seemed, until it was exposed as an urban legend.

Russian ingenuity, however, is not a myth. I got to experience it first-hand while writing The Edge of Physics. One of the many trips I made for the book was to see the Lake Baikal Neutrino Telescope near Irkutsk, in southern Siberia. The telescope is essentially long “strings” of photomultiplier tubes (PMTs) that are submerged more than a kilometer beneath the surface of the lake. PMTs can be thought of as the opposite of television tubes. A TV tube generates photons from electrical signals, while a PMT generates electrical signals from photons that hit its surface. The PMTs deep in the waters of Lake Baikal are looking for the blue Cherenkov light that is emitted when a neutrino hits a molecule of water.

So, where does Russian ingenuity come in? Well, for starters, they have figured out a way of deploying these detectors without the use of expensive ships and submersibles (as they do for the neutrino telescopes being built in the Mediterranean Sea). The Russians wait for Lake Baikal to freeze over, and then, at the peak of the Siberian winter, they establish an ice camp on top of the frozen lake. They bring their cranes and winches and the like, haul out their telescope from the depths of Lake Baikal, do the necessary maintenance and repairs, and get out of there before the ice melts.

Using this unusual and extremely hazardous mode of operation, they have managed to build the world’s first underwater neutrino telescope and run it for twenty years with only about $20 million. The other neutrino detectors, either underwater or embedded in the ice (such as at the South Pole), are costing hundreds of millions of dollars.

But the most telling illustration of Russian ingenuity – of course, necessitated by lack of resources sometimes, but worth appreciating regardless – was to do with the retrieval of a string that got cut one winter and sank to the bottom of the lake. Here’s a description of it from The Edge of Physics:

- The Lake Baikal neutrino telescope is made of eleven strings of photomultiplier tubes—each with a large buoy at the top and a counterweight at the bottom—that float nearly 1.1 kilometers below the surface (the water here is a staggering 1.4 kilometers deep, enough for a building three times as tall as New York’s Empire State to sink without a trace). Smaller buoys attached to the strings float about 10 meters below the surface. All year round, a total of 228 PMTs watch for the Cherenkov light created by neutrinos, monitoring 40 megatons of water. Each winter, once the ice camp has been set up, the team has to locate the telescope, the upper part of which drifts slightly over the course of the year. A diver plunges into the ice-cold water to locate the small buoy fixed to the center of the telescope. Then the researchers cut holes in the ice above each string (whose positions they know relative to the center) and attach a winch to the small buoys to haul up the strings. The team has two months to carry out any routine maintenance, put the strings back in the water, and get out before the ice cracks. They have perfected their technique; only once in two decades of operation did they have a problem retrieving a string. In 1994, a rusty metal cable broke, severing the buoy from its string, causing the string to sink to the bottom.

- Physicist Nikolai Budnev retrieved it. Diving that deep was out of the question, but Budnev knew that the string—though its counterweight was on the lake bed—would still be vertical because of the buoyancy of the PMTs. What he did next was ingenious. He fashioned a propeller and tied it to the end of a long rope, dropping the propeller into the water. The angle of the blades was such that as the propeller sank it started rotating, making huge circles. Budnev used this simple tool to sweep the waters below. Soon, the propeller snagged the errant string, and the team pulled it up.

I can confidently say that the Russians (and the Germans who worked alongside them) at Lake Baikal are amongst the toughest bunch of physicists I have encountered.

Here are some pictures of my trip to Lake Baikal.

March 1, 2010 No Comments