Posts from January 2010

Does physics win if Hawking loses bet?

A STORY this week in New Scientist (which I wrote) looks at the likelihood of the European Space Agency’s Planck satellite detecting gravitational waves generated during inflation. Stephen Hawking’s wager with Neil Turok adds a touch of spice to the tale. At a meeting in Cambridge last August on primordial gravitational waves, Hawking reiterated his 2002 bet with Turok, arguing “that primordial gravitational waves will be observed, with a ratio of tensors to scalars, of above 5%.”

Basically, Hawking is betting that inflation would have generated gravitational waves strong enough to be detected by Planck (not directly, but as imprints in the cosmic microwave background). Inflation is that episode in the history of the universe – just a fraction of a second after the big bang – when the universe expanded exponentially. Inflation was proposed to explain why the universe is so flat and homogeneous, and is thought to have occurred while a field called the inflaton suffused spacetime and changed very slowly (physicists talk of the inflaton rolling down a gently sloping potential hill). This caused spacetime to expand enormously. It was a very violent period: quantum fluctuations of spacetime itself were stretched to cosmic scales, roiling spacetime and generating gravitational waves.
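The tensor-to-scalar ratio in Hawking's bet is tied to the energy scale of inflation. In the standard single-field slow-roll picture, the inflaton's potential energy is V = (3π²/2) A_s r M_Pl⁴, where A_s is the amplitude of the density perturbations and M_Pl the reduced Planck mass. The sketch below evaluates that relation; the values A_s ≈ 2.1 × 10⁻⁹ and M_Pl ≈ 2.435 × 10¹⁸ GeV are approximate inputs assumed here, not figures from the post, and the function name is hypothetical.

```python
import math

# Approximate values assumed for this sketch (not from the post).
REDUCED_PLANCK_MASS_GEV = 2.435e18  # M_Pl = (8*pi*G)^(-1/2), in GeV
SCALAR_AMPLITUDE = 2.1e-9           # A_s, amplitude of the density perturbations


def inflation_energy_scale_gev(r: float, a_s: float = SCALAR_AMPLITUDE) -> float:
    """Return V**(1/4), the energy scale of inflation in GeV, for a
    tensor-to-scalar ratio r, using V = (3 pi^2 / 2) * A_s * r * M_Pl^4."""
    if r <= 0:
        raise ValueError("r must be positive")
    potential = 1.5 * math.pi**2 * a_s * r * REDUCED_PLANCK_MASS_GEV**4
    return potential ** 0.25


# Hawking's 5% threshold compared with smaller ratios.
for r in (0.05, 0.01, 1e-4):
    print(f"r = {r:g}: V^(1/4) ~ {inflation_energy_scale_gev(r):.1e} GeV")
```

Because r grows as the fourth power of the energy scale, lowering the scale of inflation by a factor of 10 cuts r by a factor of 10,000. That is why a modest drop in the energy scale can push the signal out of Planck's reach.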

Turok, on the other hand, argues that inflation never occurred. He and Paul Steinhardt explain the observed properties of the cosmos using their cyclic universe model, which posits that the universe cycles through a series of big bangs and big crunches. In their model, the gravitational waves generated in the early universe would not have been strong enough to leave a detectable imprint on the CMB.

But it’s possible that inflation occurred and yet produced gravitational waves too weak for Planck to see. So Hawking could still lose the bet without Turok and Steinhardt being right about the cyclic universe model.

How could that happen? Well, the New Scientist story explains one physicist’s take on it. Qaisar Shafi of the University of Delaware has worked out models of inflation in which the inflaton field rolls down a potential similar to that of the Higgs field. At the end of inflation, the inflaton must also interact with standard model fields to reheat the universe and give rise to the standard model particles. In models that include both features, the inflation-generated gravitational waves turn out to be too weak for Planck to detect.

The gravitational waves could also be weaker if the universe is supersymmetric, an idea that says there is a super-partner particle for every known standard model particle (see New Scientist feature on supersymmetry). Suppose the Large Hadron Collider detects supersymmetry at the teraelectronvolt (TeV) scale. That would mean the gravitino (the super-partner of the graviton) is only about a thousand times heavier than a proton, or roughly 1 TeV. This has implications for inflation: a gravitino of such a mass means that the energy scale of the universe at the end of inflation was also low, and so any inflation-generated gravitational waves would be beyond Planck’s reach.
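One way to see how a TeV-scale gravitino could starve Planck of a signal: the tensor power spectrum is P_t = 2H²/(π² M_Pl²), where H is the Hubble rate during inflation, and r = P_t / A_s. The sketch below assumes, as in some string-inspired models, that H cannot exceed the gravitino mass. That assumption, the constants and the function name are illustrative additions, not taken from the post.

```python
import math

# Approximate values assumed for this sketch (not from the post).
REDUCED_PLANCK_MASS_GEV = 2.435e18
SCALAR_AMPLITUDE = 2.1e-9
PROTON_MASS_GEV = 0.938272


def tensor_to_scalar_ratio(hubble_gev: float, a_s: float = SCALAR_AMPLITUDE) -> float:
    """r = P_t / A_s, with tensor power P_t = 2 H^2 / (pi^2 M_Pl^2)."""
    tensor_power = 2.0 * hubble_gev**2 / (math.pi**2 * REDUCED_PLANCK_MASS_GEV**2)
    return tensor_power / a_s


# "About a thousand times heavier than a proton" is roughly 1 TeV.
gravitino_mass_gev = 1000 * PROTON_MASS_GEV

# Assumption: the Hubble rate during inflation is capped by the gravitino mass.
r_upper_bound = tensor_to_scalar_ratio(gravitino_mass_gev)
print(f"gravitino mass ~ {gravitino_mass_gev / 1000:.2f} TeV")
print(f"r <~ {r_upper_bound:.1e}  (compare Hawking's threshold of 0.05)")
```

Under this assumption r comes out more than twenty orders of magnitude below Hawking's 5% threshold.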

I suspect most physicists would rather find evidence for supersymmetry at the LHC and forgo the detection of inflation-generated gravitational waves until a future, more sensitive experiment is ready.

Why? Well, supersymmetry is the most likely candidate for physics beyond the standard model (and we know there has to be physics beyond the standard model). Finding evidence of supersymmetry would help physicists chart a course towards a theory of quantum gravity.

Finding evidence of inflation-generated gravitational waves, while extremely exciting and genuinely gratifying, would probably not help physics in the same earth-shaking manner as the discovery of supersymmetry. It would help fix the energy scale of inflation and maybe even provide clues to whether models of inflation built using string theory are on the right track. But it would not have the same potential for steering physics in a new direction as the sighting of a supersymmetric particle at the LHC.

That’s a roundabout way of saying that it’s better for physics that Hawking loses his bet and the LHC finds supersymmetry.

January 29, 2023

From KAT to MeerKAT

SOUTH Africa has taken a giant step towards fulfilling its ambition of hosting the Square Kilometre Array (SKA), which will be the world’s largest radio telescope.

picture credit: The SKA South Africa team

Last month, radio astronomers obtained interference fringes from data collected by two dishes of the Karoo Array Telescope (KAT). When complete, the KAT will be a 7-dish array, and is itself a precursor to the 80-dish MeerKAT. (In Afrikaans, meer means “more”; and of course, the meerkat calls the Kalahari Desert home, a region just north of the vast, semi-arid Karoo, where the radio telescope is being built.)
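Interference fringes are what a pair of dishes produces when their signals are correlated. A wavefront from a point source reaches one dish slightly later than the other, and as the sky drifts overhead, that delay changes, so the correlated output swings between positive and negative. The sketch below models an idealised two-element interferometer. The 100 m baseline and 1.4 GHz observing frequency are hypothetical values chosen for illustration, not KAT's actual parameters, and the function names are made up.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def geometric_delay_s(baseline_m: float, angle_rad: float) -> float:
    """Extra time the wavefront takes to reach the farther dish, for a source
    at angle_rad from the direction perpendicular to the baseline."""
    return baseline_m * math.sin(angle_rad) / SPEED_OF_LIGHT_M_S


def fringe_response(baseline_m: float, freq_hz: float, angle_rad: float) -> float:
    """Normalised correlator output (cosine part) for a monochromatic point source."""
    return math.cos(2 * math.pi * freq_hz * geometric_delay_s(baseline_m, angle_rad))


def fringe_spacing_rad(baseline_m: float, freq_hz: float) -> float:
    """Angular period of the fringes near angle 0: wavelength / baseline."""
    return (SPEED_OF_LIGHT_M_S / freq_hz) / baseline_m


# Hypothetical two-dish set-up, not KAT's actual parameters.
baseline_m, freq_hz = 100.0, 1.4e9
spacing = fringe_spacing_rad(baseline_m, freq_hz)
print(f"fringe spacing ~ {math.degrees(spacing) * 60:.1f} arcmin")

# As the source drifts, the output oscillates between +1 and -1: these are the fringes.
for step in range(5):
    angle = step * spacing / 4
    print(f"angle = {math.degrees(angle) * 60:5.2f} arcmin -> "
          f"{fringe_response(baseline_m, freq_hz, angle):+.2f}")
```

Longer baselines give finer fringes, which is why arrays spread their dishes out to sharpen their resolution.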

The KAT is expected to be completed by the end of January, and will boast innovative antennas made of composite materials. If South Africa wins the bid to host the Square Kilometre Array, the KAT/MeerKAT designs may well be used to build the 3000 antennas of the SKA.

For some pictures of my trip to the Karoo to see where the SKA could be built, see Chapter 6 of The Edge of Physics.

January 15, 2023

X-ray vision for dark matter

If dark matter is made of particles called super-WIMPs (what a strange juxtaposition of words), then X-ray telescopes could potentially provide unambiguous evidence for these particles, not just in our galaxy, but even in nearby dwarf and satellite galaxies, and maybe even Andromeda.

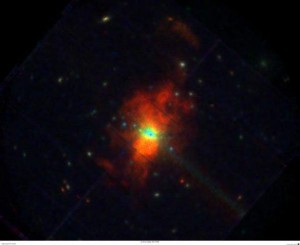

XMM-Newton image of M82 galaxy in X-rays. Image courtesy of P. Ranalli and ESA.

At least that’s what Alexey Boyarsky of the École Polytechnique Fédérale de Lausanne in Switzerland and colleagues think. In their analysis, super-WIMPs would decay into telltale X-ray photons. Given that we know next to nothing about exactly what makes up dark matter, it’s an interesting line of thinking to follow, especially given a recent analysis of data from the FERMI satellite that supports decaying dark matter.

Before we get to super-WIMPS, a brief word about WIMPs, or weakly interacting massive particles. These are the physicists’ favourites when it comes to explaining dark matter. They arise naturally in extensions to the standard model of particle physics such as supersymmetry. The lightest supersymmetric particle in many models is the neutralino. This hypothetical particle is stable and an enticing candidate to be dark matter, because it satisfies many of the theoretical requirements.

Many direct detection experiments (such as CDMS and Xenon10) are looking for the signature of these WIMPs hitting their detectors. None have been sighted unambiguously yet. Satellites like FERMI and PAMELA are looking for indirect evidence, in the form of positrons or gamma-rays expected from the annihilation of these particles in regions where they exist in high concentrations (say, the centre of the Milky Way).

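There is another class of particles, arising from theories that extend the standard model (including supersymmetry and some models motivated by string theory), that interact even more weakly with normal matter than WIMPs do. Hence the odd name of super-WIMPs. It turns out that these super-WIMPs can decay into a photon and a neutrino, or into two photons. Either way, the photon energy is fixed by the particle’s mass: in the two-photon case each photon carries exactly half the mass-energy of the dark matter particle, and in the photon-plus-neutrino case the photon carries very nearly half, because the neutrino’s mass is negligible. The result is a narrow spectral line.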
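The "half the mass" rule follows from two-body decay kinematics: for a particle of mass M at rest decaying into a photon and a partner of mass m, the photon energy is E = (M² − m²)/(2M), in units where c = 1. The sketch below evaluates this for a hypothetical 7 keV particle and a hypothetical 0.1 eV neutrino mass; neither value comes from the post, and the function name is illustrative.

```python
def photon_energy_two_body(parent_mass: float, partner_mass: float = 0.0) -> float:
    """Energy of the photon when a particle at rest decays into a photon plus one
    other particle (masses and energy in the same units, c = 1):
    E_gamma = (M^2 - m^2) / (2 M)."""
    if not 0.0 <= partner_mass < parent_mass:
        raise ValueError("need 0 <= partner_mass < parent_mass")
    return (parent_mass**2 - partner_mass**2) / (2.0 * parent_mass)


# Hypothetical 7 keV dark matter particle (illustrative, not from the post).
mass_kev = 7.0
print("two photons:      ", photon_energy_two_body(mass_kev), "keV each")
print("photon + neutrino:", photon_energy_two_body(mass_kev, partner_mass=1e-4), "keV")
```

The two cases differ by less than a billionth of a keV, so both would appear at essentially the same X-ray energy.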

Now, these super-WIMPs are effectively stable, with lifetimes much longer than the age of the universe. So, how is one to look for their decay products? Well, if you study regions where dark matter concentrations are high, the sheer number of particles makes it likely that you will catch some of them decaying.

Most importantly, the decay signal depends on the column density of dark matter, the total amount of dark matter along the line of sight. Since this column density differs from place to place and from one astrophysical object to another, one can work out what various observations should see.

So, say you see some tantalising X-ray emission from one of the Milky Way’s satellites. If you think it’s coming from dark matter, you can do the relevant calculations and predict what you should see from other sources, such as the Andromeda Galaxy. It seems like quite a robust test.
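The cross-check works because the expected line flux is proportional to the decay rate Γ, the field of view Ω and the column density S, divided by the particle mass m: F = N_γ Γ Ω S / (4π m), where N_γ is the number of photons per decay. If the same particle is responsible everywhere, Γ and m cancel when comparing two objects, so a line seen in one object predicts the flux from another. This is a minimal sketch assuming the column density is uniform across the field of view and ignoring instrument response and backgrounds. The function names and all numbers are hypothetical.

```python
import math


def decay_line_flux(decay_rate_per_s: float,
                    particle_mass_kev: float,
                    column_density_kev_per_cm2: float,
                    solid_angle_sr: float,
                    photons_per_decay: int = 1) -> float:
    """Line flux in photons / cm^2 / s from dark matter decaying in a field of view,
    assuming a uniform column density S across it:
        F = photons_per_decay * Gamma * Omega * S / (4 pi m)."""
    number_column = column_density_kev_per_cm2 / particle_mass_kev  # particles per cm^2
    return photons_per_decay * decay_rate_per_s * solid_angle_sr * number_column / (4.0 * math.pi)


def predict_flux(observed_flux: float,
                 column_a: float, solid_angle_a: float,
                 column_b: float, solid_angle_b: float) -> float:
    """Scale a flux seen from object A to object B: decay rate and mass cancel."""
    return observed_flux * (column_b * solid_angle_b) / (column_a * solid_angle_a)


# Hypothetical inputs, purely illustrative.
flux_satellite = 1e-6                 # photons/cm^2/s attributed to a satellite galaxy
s_satellite, s_andromeda = 1.0, 3.0   # column densities relative to the satellite
omega = 1e-4                          # same field of view for both (sr)
print(predict_flux(flux_satellite, s_satellite, omega, s_andromeda, omega))
```

If the predicted line fails to appear at the expected strength, the original claim is in trouble.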

Boyarsky et al. used just such an analysis to rule out claims that dark matter decay lines had been seen from Willman 1, a Milky Way satellite. For the study, they searched archival data from the XMM-Newton and Chandra X-ray satellites for similar lines in other sources.

This does not mean that decaying dark matter is ruled out entirely; current searches are not yet sensitive enough. But with a new generation of spectrometers with a large field of view and high energy resolution, the hunt is going to get more sophisticated.

January 8, 2023

Looking back at alpha

It’s the New Year, and one favourite activity is to look back at the past year, or even the past decade. Cambridge University’s John Barrow bettered everyone by looking back about 11.8 billion years. In a paper submitted to the physics preprint server on 30 December, he reviews theories that allow alpha (the fine-structure constant) to vary, in light of earlier observations of quasars at redshifts of up to 3.5 (we see these objects as they were about 1.8 billion years after the big bang, according to Ned Wright’s cosmology calculator).
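The conversion from redshift 3.5 to "about 1.8 billion years after the big bang", and to a look-back of about 11.8 billion years, can be roughly reproduced for a flat universe containing matter and a cosmological constant. Such a universe has the closed-form age t(z) = (2/(3H₀√Ω_Λ)) asinh(√(Ω_Λ/Ω_m) (1+z)^(−3/2)). The parameters H₀ = 71 km/s/Mpc and Ω_m = 0.27 are assumed values typical of the time. Radiation is ignored, so this is an approximation to what a full cosmology calculator does, not a copy of Ned Wright's code.

```python
import math

HUBBLE_TIME_GYR_KM_S_MPC = 977.792  # 1/H0 in Gyr when H0 is given in km/s/Mpc


def age_at_redshift_gyr(z: float, h0: float = 71.0, omega_m: float = 0.27) -> float:
    """Age of a flat matter + Lambda universe at redshift z, in Gyr (radiation ignored)."""
    omega_lambda = 1.0 - omega_m
    hubble_time_gyr = HUBBLE_TIME_GYR_KM_S_MPC / h0
    x = math.sqrt(omega_lambda / omega_m) * (1.0 + z) ** -1.5
    return 2.0 * hubble_time_gyr / (3.0 * math.sqrt(omega_lambda)) * math.asinh(x)


age_then = age_at_redshift_gyr(3.5)
age_now = age_at_redshift_gyr(0.0)
print(f"age at z = 3.5: {age_then:.2f} Gyr")
print(f"look-back time: {age_now - age_then:.2f} Gyr")
```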

credit: NASA/ESA/ESO/Wolfram Freudling et al. (STECF)

Why should we care whether alpha varies or not? Well, for one thing, it is regarded as a fundamental constant of nature, so any evidence that it has changed with time would be a rather big deal for physics.

Barrow details heady notions as to why we should care. For instance, our best bets for a theory of quantum gravity – which combine general relativity with quantum mechanics – seem to require higher dimensions to work correctly. Barrow writes, “This means that the true constants of nature are defined in higher dimensions and the three-dimensional shadows we observe are no longer fundamental and need not be constant. Any slow change in the scale of the extra dimensions would be revealed by measurable changes in our three-dimensional ‘constants’.”

Astronomers have been testing whether alpha (a dimensionless constant that characterises the strength of the electromagnetic force and is built from the electron’s charge, the Planck constant and c, the speed of light) has changed with time. The technique measures the separations between spectral lines absorbed by distant gas clouds that are illuminated by quasars behind them; these separations are sensitive to the value of alpha. A study of 143 such quasar absorption systems using the Keck-I telescope on Mauna Kea, Hawaii, and its HIRES spectrograph suggests that alpha was a smidgen smaller early in the life of the universe.
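For reference, alpha can be computed from the constants it is built from: α = e²/(4πε₀ħc) ≈ 1/137. The sketch also shows why line separations are a useful probe. In the simplest (alkali-doublet) version of the method, a doublet's fine-structure splitting relative to its wavelength scales as α², so a small fractional change in alpha shows up roughly doubled. The Keck study relied on a more elaborate comparison of many transitions, which this sketch does not attempt. The Δα/α value below is hypothetical and the function name is illustrative.

```python
import math

# CODATA 2018 values in SI units (hbar rounded).
ELEMENTARY_CHARGE_C = 1.602176634e-19
VACUUM_PERMITTIVITY_F_M = 8.8541878128e-12
REDUCED_PLANCK_J_S = 1.054571817e-34
SPEED_OF_LIGHT_M_S = 299_792_458.0

alpha = ELEMENTARY_CHARGE_C**2 / (
    4 * math.pi * VACUUM_PERMITTIVITY_F_M * REDUCED_PLANCK_J_S * SPEED_OF_LIGHT_M_S
)
print(f"alpha = {alpha:.10f}  (1/alpha = {1 / alpha:.3f})")


def fractional_doublet_change(delta_alpha_over_alpha: float) -> float:
    """Fractional change in a doublet's relative splitting, if splitting scales as alpha^2."""
    return (1.0 + delta_alpha_over_alpha) ** 2 - 1.0


# Hypothetical shift of one part in 100,000.
print(fractional_doublet_change(-1e-5))
```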

Bear in mind that no other telescope and spectrograph combination has confirmed this result. Of course, none has ruled it out either.

Future astronomical observations and even laboratory experiments will continue to probe the constancy of alpha.

If doubts arise as to the importance of such experiments, Barrow reminds us that “It is sobering to remember that at present we have no idea why any of the constants of Nature take the numerical values they do and we have never successfully predicted the value of any dimensionless constant in advance of its measurement.”

A lovely note on which to welcome the New Year.

January 2, 2023